Understanding the absolute risk formula can make healthcare predictions and interventions more precise. The metric is simple but informative: it measures the probability that an event occurs in a defined group within a specified time frame. Comparing absolute risks between groups that differ in one factor, such as treatment or exposure, shows how much that factor changes the risk, which supports better-informed decisions.

This article explains the absolute risk formula, using practical, real-world examples to show why it matters in medical and public health contexts. It covers the formula's core components, its use in clinical trials and public health policy, how it differs from relative risk, and its role in predictive analytics.

Core Components of Absolute Risk

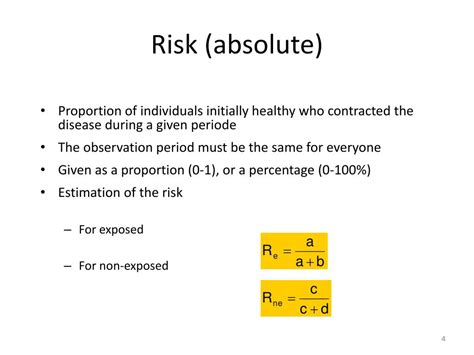

At its essence, absolute risk measures the likelihood that a specific event, such as a disease or condition, occurs within a defined population over a set period. It is calculated by dividing the number of new cases of the event during that period by the number of people at risk, that is, people who did not already have the condition at the start of the period. The result is a proportion between 0 and 1; multiplying by 100 expresses it as a percentage: Absolute Risk (%) = (Number of New Cases / Total Population at Risk) * 100.
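The calculation can be written as a small Python function. This is a minimal sketch: the function name `absolute_risk` and the cohort numbers in the usage lines are hypothetical, chosen only to illustrate the arithmetic.

```python
def absolute_risk(new_cases: int, population_at_risk: int, as_percent: bool = True) -> float:
    """Absolute risk over a fixed follow-up period (hypothetical helper).

    new_cases: people who developed the event during the period.
    population_at_risk: people free of the event at the start of the period.
    """
    if population_at_risk <= 0:
        raise ValueError("population_at_risk must be positive")
    if not 0 <= new_cases <= population_at_risk:
        raise ValueError("new_cases must be between 0 and population_at_risk")
    if as_percent:
        # Multiply before dividing so whole-number percentages come out exact.
        return 100 * new_cases / population_at_risk
    return new_cases / population_at_risk


# Hypothetical cohort: 50 new cases among 1,000 people followed for 5 years.
print(absolute_risk(50, 1000))         # 5.0
print(absolute_risk(50, 1000, False))  # 0.05
```

The risk is only meaningful alongside its time frame: 5% over 5 years is not the same as 5% over 1 year.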

Practical Applications of Absolute Risk

One of the most compelling uses of the absolute risk formula is in clinical trials, where researchers compare the risk of an outcome in the treatment group with the risk in the control group. In a trial of a new drug, for example, the absolute risk reduction (ARR), the control group's risk minus the treated group's risk, is a critical metric. If the absolute risk of the adverse outcome is 10% in the treated group and 20% in the control group, the ARR is 10 percentage points: about 10 fewer events for every 100 patients treated. Whether that difference is statistically significant depends on the trial's size and analysis, but stating it in absolute terms lets physicians weigh benefits against risks accurately.
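Using the trial figures above, the ARR is a simple subtraction. The sketch below assumes both risks are already expressed as proportions (0.20 for 20%); the function name is hypothetical.

```python
def absolute_risk_reduction(risk_control: float, risk_treated: float) -> float:
    """ARR = risk in the control group minus risk in the treated group.

    A positive value means fewer events in the treated group;
    a negative value means the treatment increased the risk.
    """
    for r in (risk_control, risk_treated):
        if not 0 <= r <= 1:
            raise ValueError("risks must be proportions between 0 and 1")
    return risk_control - risk_treated


# Figures from the example: 20% in the control group, 10% in the treated group.
arr = absolute_risk_reduction(0.20, 0.10)
print(f"ARR = {arr * 100:.0f} percentage points")  # ARR = 10 percentage points
```

Reporting the result in percentage points avoids confusing an absolute difference with a relative one.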

Key Insights

- Absolute risk gives a clear probability that a specific event will occur in a defined population over a defined period.

- When comparing treatments, the absolute risk reduction shows the size of a benefit or harm in concrete terms.

- Applying the absolute risk formula in public health can lead to more targeted interventions.

Comparing Absolute Risk with Relative Risk

Absolute risk should not be confused with relative risk. Absolute risk is the raw probability of an event in one group; relative risk is the ratio of the risk in the exposed group to the risk in the non-exposed group. For example, if the absolute risk of heart disease is 20% in a high-cholesterol group and 10% in a low-cholesterol group, the relative risk is 20% / 10% = 2.0, while the absolute difference is 10 percentage points. Looking at both helps healthcare providers understand how much specific exposures, such as diet and lifestyle, affect health outcomes.
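The difference between the two measures is easiest to see side by side. This sketch (hypothetical function names) reproduces the cholesterol example and then adds a second, hypothetical rare outcome with risks of 0.2% and 0.1%: the relative risk is the same 2.0, but the absolute gap is a hundred times smaller.

```python
def relative_risk(risk_exposed: float, risk_unexposed: float) -> float:
    """Ratio of the event risk in the exposed group to that in the unexposed group."""
    if risk_unexposed <= 0:
        raise ValueError("risk_unexposed must be positive")
    return risk_exposed / risk_unexposed


def risk_difference(risk_exposed: float, risk_unexposed: float) -> float:
    """Absolute difference in risk between the exposed and unexposed groups."""
    return risk_exposed - risk_unexposed


# Cholesterol example from the text: 20% vs 10%.
print(relative_risk(0.20, 0.10))               # 2.0
print(round(risk_difference(0.20, 0.10), 4))   # 0.1

# Hypothetical rare outcome: 0.2% vs 0.1%.
print(relative_risk(0.002, 0.001))             # 2.0
print(round(risk_difference(0.002, 0.001), 4)) # 0.001
```

A relative risk alone cannot tell you whether an exposure adds 10 cases per 100 people or 1 case per 1,000, which is why the absolute figure matters.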

Using Absolute Risk in Public Health Policy

Public health officials rely on absolute risk data to develop disease-prevention strategies. For instance, the absolute risk of smoking-related cancers can guide smoking cessation programs and health policy. If the absolute risk of lung cancer over a given period is 15% in smokers compared to 1% in non-smokers, that 14-percentage-point gap underscores the need for cessation initiatives. As people quit, fewer of them remain in the higher-risk group, so targeted efforts can reduce the overall absolute risk in the population.
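How cessation lowers the population-wide figure can be sketched as a weighted average of the two groups' risks. The smoking prevalences used below (20% before and 10% after a program) are hypothetical, and the model assumes that people who quit immediately take on the non-smoker risk, which is a simplification since risk after quitting declines gradually.

```python
def population_risk(prevalence: float, risk_exposed: float, risk_unexposed: float) -> float:
    """Overall absolute risk in a population mixing exposed and unexposed people.

    prevalence: hypothetical share of the population that is exposed (0 to 1).
    Simplifying assumption: quitters immediately take on the unexposed risk.
    """
    if not 0 <= prevalence <= 1:
        raise ValueError("prevalence must be between 0 and 1")
    return prevalence * risk_exposed + (1 - prevalence) * risk_unexposed


# Risks from the text: 15% for smokers, 1% for non-smokers.
before = population_risk(0.20, 0.15, 0.01)  # hypothetical 20% smoking prevalence
after = population_risk(0.10, 0.15, 0.01)   # hypothetical 10% after a cessation program
print(f"{before:.1%} -> {after:.1%}")       # 3.8% -> 2.4%
```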

What are the limitations of the absolute risk formula?

While the absolute risk formula provides clear, actionable data, it describes the average risk of a group and may not reflect any one individual's risk. Genetic predispositions, lifestyle choices, and coexisting health conditions can all push a person's risk above or below the group figure, so population-level absolute risk is less personalized than it may appear.

How can absolute risk be improved for predictive analytics?

To improve predictive analytics, absolute risk can be combined with other metrics, such as relative risk, and with demographic and lifestyle data to give a more comprehensive risk assessment. Machine learning models can integrate these variables to produce more nuanced, personalized risk predictions.
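A simpler first step than a machine learning model is to stratify: compute absolute risk separately for each demographic subgroup. The sketch below is not a predictive model; it assumes each record is a hypothetical `(group_label, had_event)` pair for one person at risk, and the age bands and counts are invented for illustration.

```python
from collections import defaultdict


def stratified_absolute_risk(records):
    """Absolute risk per subgroup, as a proportion.

    records: iterable of (group_label, had_event) pairs, one per person at risk.
    """
    counts = defaultdict(lambda: [0, 0])  # group -> [events, people at risk]
    for group, had_event in records:
        counts[group][1] += 1
        if had_event:
            counts[group][0] += 1
    return {group: events / n for group, (events, n) in counts.items()}


# Hypothetical cohort: 10 people in each age band.
records = ([("under_50", True)] * 1 + [("under_50", False)] * 9
           + [("50_plus", True)] * 4 + [("50_plus", False)] * 6)

overall = sum(had_event for _, had_event in records) / len(records)
print(f"overall: {overall:.0%}")  # overall: 25%
for group, risk in stratified_absolute_risk(records).items():
    print(f"{group}: {risk:.0%}")  # under_50: 10%, then 50_plus: 40%
```

The single overall figure of 25% hides a fourfold difference between the two bands, which is the kind of variability the previous section describes.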

In summary, the absolute risk formula is a valuable tool for precise decision-making in healthcare and public health. Understanding how it is applied in clinical trials and policy-making helps professionals use this metric to improve outcomes and inform critical decisions.