Mastering Conditional Independence in Data Science

Understanding conditional independence is crucial for any data scientist. It allows you to make more informed decisions by reducing the complexity of relationships between variables. This guide aims to provide you with step-by-step guidance, practical solutions, and actionable advice to master conditional independence, offering real-world examples and tips to navigate this complex subject.

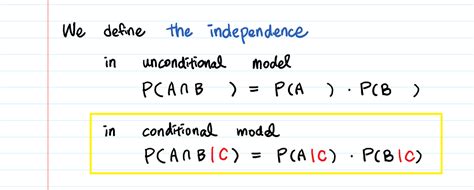

In data science, conditional independence refers to the idea that given some variables, other variables are not related. Formally, variables A and B are conditionally independent given variable C if knowing the value of C renders A and B statistically independent. This concept is key in simplifying models, particularly in Bayesian statistics, graphical models like Bayes networks, and other machine learning algorithms.

Getting Started with Conditional Independence

Conditional independence helps in breaking down complex relationships in your data. When you model your data, knowing that certain variables don't have an impact on others (conditional on some third variable) allows you to reduce the complexity of your models. For example, if a person’s salary (A) is conditionally independent of their education level (B) given their years of experience (C), then including 'experience' in your model can simplify your analysis while maintaining accuracy.

By grasping conditional independence, you can focus on the most relevant variables, improve model performance, and avoid the pitfalls of multicollinearity. Here’s a quick start guide to get you familiar with key concepts:

Quick Reference

- Immediate action item with clear benefit: Identify variables that might not impact your target variable, given the presence of a third variable.

- Essential tip with step-by-step guidance: Use Bayes’ theorem to determine conditional independence; the formula P(A|B) = P(B|A)P(A)/P(B) can help assess how information changes in the presence of another variable.

- Common mistake to avoid with solution: Don’t overlook the impact of hidden variables; always check if the relationships you observe are truly independent or if another hidden variable is at play.

Understanding Bayes’ Theorem and Conditional Probability

At the heart of conditional independence lies Bayes’ Theorem. This theorem forms the backbone of many Bayesian statistical techniques and machine learning algorithms. To apply it effectively, follow these steps:

- Start by defining the probability of variable A given variable B: P(A|B).

- Determine the prior probability of A: P(A).

- Find the likelihood of B given A: P(B|A).

- Calculate the marginal likelihood of B: P(B).

- Use Bayes’ formula: P(A|B) = P(B|A)P(A)/P(B) to compute conditional probabilities.

Let’s say you're working on a fraud detection system. If you want to find the probability of a transaction being fraudulent (A) given that it’s classified as suspicious (B), Bayes’ theorem can provide the necessary insight. With prior knowledge of the likelihood of suspicious transactions and the frequency of fraud, this formula can help you determine the probability more accurately.

Here’s a step-by-step example:

Suppose you know the following:

- P(A): Probability of a transaction being fraudulent, say 0.001.

- P(B): Probability of a transaction being classified as suspicious, say 0.1.

- P(B|A): Probability of a transaction being suspicious given that it’s fraudulent, say 0.8.

Using Bayes’ formula:

P(A|B) = P(B|A)P(A)/P(B)

Substitute the values:

P(A|B) = (0.8 * 0.001) / 0.1 = 0.0008 / 0.1 = 0.008 or 0.8%

This result tells you that if a transaction is classified as suspicious, there is only an 0.8% probability that it’s actually fraudulent.

Graphical Models and Conditional Independence

Graphical models like Bayesian Networks and Markov Chains are often used to depict complex relationships between variables and represent conditional independence visually. Let’s break down how to construct and interpret these models:

- Create a directed acyclic graph (DAG) where nodes represent variables and edges represent dependencies.

- Check for conditional independence: If a variable X is independent of another variable Y given a third variable Z, ensure there is no active path between X and Y when conditioning on Z.

- Implement the model: Use software like R or Python libraries (e.g., PyMC3, TensorFlow Probability) to build the graphical model and perform simulations.

For example, suppose you have a dataset where you need to model how students' performance (A) depends on their study habits (B) and their natural abilities (C). If you know that students' performance is conditionally independent of their natural abilities given their study habits, you can set up a DAG with A, B, and C, ensuring there’s no direct path between A and C when B is known.

Advanced Techniques in Conditional Independence

Once you’ve mastered the basics, it’s time to delve deeper into more advanced methods and tools:

- Sensitivity analysis: Evaluate how changes in one variable affect another conditional on a third. This can identify the robustness of your assumptions.

- Statistical tests: Use tests like the Chi-Square test or G-test to determine if two variables are conditionally independent given a third.

- Machine learning techniques: Implement algorithms like LASSO or Ridge regression, which inherently assume some level of conditional independence to reduce overfitting.

For instance, in a health study examining the impact of diet (A), exercise (B), and genetics (C) on overall health (D), you might use LASSO regression. This algorithm will help you determine which variables remain significant predictors of health status, assuming a degree of conditional independence based on the model’s penalty terms.

Practical FAQ

What are common mistakes when applying conditional independence?

A frequent oversight is overlooking hidden confounding variables. Always check if relationships observed between two variables are truly independent or if there’s an underlying, unaccounted variable influencing them. Another mistake is misapplying conditional independence in overly complex models without justification; use conditional independence to simplify rather than complicate.

How do I test for conditional independence?

You can use statistical tests like the Chi-Square test or G-test to evaluate conditional independence. For example, to test if diet (A) is conditionally independent of exercise (B) given genetics (C), you could perform a Chi-Square test on the frequency table of the observed and expected values for combinations of diet, exercise, and genetics. If the test statistic is less than the critical value from the Chi-Square distribution, the variables are conditionally independent.

Can conditional independence apply in time-series analysis?

Yes, conditional independence can be applied in time-series analysis, but it often involves lagged dependencies. For instance, in an economic model, current economic growth (A) might be conditionally independent of past growth (B) given current policy decisions (C). To check this, you’d apply techniques like Granger causality tests to see if current economic growth is predictable by past growth conditioned on current policies.

In conclusion, mastering conditional independence is pivotal for refining your data models and making more accurate predictions. From using Bayes’ theorem to setting up complex graphical models, the principles outlined in this guide will help you navigate conditional independence effectively, reducing model complexity and improving accuracy in your data science projects.